Almost three years ago, McKinsey published a report arguing that limiting global temperature rises to 1.5 degrees Celsius above pre-industrial levels was “technically achievable,” but that the “math is daunting.” Indeed, when the 1.5°C figure was agreed to at the 2015 Paris climate conference, the assumption was that emissions would peak before 2025, and then fall 43 percent by 2030.

Given that 2022 saw the highest emissions ever, at 36.8 gigatons, the math is now more daunting still: cuts would need to be greater, and faster, than envisioned in Paris. Perhaps that is why the Intergovernmental Panel on Climate Change (IPCC) noted on March 20 (with “high confidence”) that it was “likely that warming will exceed 1.5°C during the 21st century.”

I agree with that gloomy assessment. Given the rate of progress so far, 1.5°C looks all but impossible. That puts me in the company of people like Bill Gates, the Economist, the Australian Academy of Science, and apparently many IPCC scientists. McKinsey has estimated that even if all countries deliver on their net zero commitments, temperatures will likely be 1.7°C higher in 2100.

In October, the UN Environment Program (UNEP) argued that there was “no credible pathway to 1.5°C in place” and called for “an urgent system-wide transformation” to change the trajectory. Among the changes it considers necessary: carbon taxes; land use reform; dietary changes in which individuals “consume food for environmental sustainability and carbon reduction”; investment of $4 trillion to $6 trillion a year; applying current technology to all new buildings; and no new fossil fuel infrastructure. And so on.

Let’s assume that the UNEP is right. What are the chances of all this happening in the next few years? Or, indeed, any of it? President Obama’s former science adviser, Daniel Schrag, put it this way: “Who believes that we can halve global emissions by 2030? ... It’s so far from reality that it’s kind of absurd.”

Having a goal is useful, concentrating minds and organizing effort. And I think that has been the case with 1.5°C, or recent commitments to get to net zero. Targets create a sense of urgency that has led to real progress on decarbonization.

The 2020 McKinsey report set out how to get on the 1.5°C pathway, and was careful to note that this was not a description of probability or reality but “a picture of a world that could be.” Three years later, that “world that could be” looks even more remote.

Consider the United States, the world’s second-largest emitter. In 2021, 79 percent of primary energy demand (see chart) was met by fossil fuels, about the same as a decade before. Globally, the figures are similar, with renewables accounting for just 12.5 percent of consumption and low-emissions nuclear another 4 percent. Those numbers would have to basically reverse in the next decade or so to get on track. I don’t see how that can happen.

Credit: Energy Information Administration

But even if 1.5°C is improbable in the short term, that doesn’t mean that missing the target won’t have consequences. And it certainly doesn’t mean giving up on addressing climate change. In fact, there are some positive trends. Many companies are developing comprehensive plans for achieving net-zero emissions and are making those plans part of their long-term strategy. Moreover, while global emissions grew 0.9 percent in 2022, that was much less than GDP growth (3.2 percent). It’s worth noting, too, that much of the increase came from switching from gas to coal in response to the Russian invasion of Ukraine; that is the kind of supply shock that can be reversed. The point is that growth and emissions no longer move in lockstep; economies can now grow considerably faster than their emissions do. That is critical because poorer countries are never going to take serious climate action if they believe it threatens their future prosperity.
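To put a rough number on that gap, here is a back-of-the-envelope sketch using only the two 2022 growth rates cited above. The function name and the idea of expressing the result as "emissions per unit of GDP" are my own framing for illustration, not a figure taken from any report.

```python
def intensity_change(emissions_growth: float, gdp_growth: float) -> float:
    """Fractional change in emissions per unit of GDP over one year.

    Both arguments are fractional growth rates (0.009 means 0.9 percent).
    """
    return (1 + emissions_growth) / (1 + gdp_growth) - 1


# 2022 figures cited in the text: emissions +0.9 percent, GDP +3.2 percent.
change = intensity_change(0.009, 0.032)
print(f"Change in emissions per unit of GDP: {change:.1%}")
```

Under these assumptions, each unit of global output came with roughly 2 percent less carbon in 2022 than the year before. That is movement in the right direction, but total emissions still rose.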

Another implication is that limiting emissions means addressing the use of fossil fuels. As noted, even with the substantial rise in the use of renewables, coal, gas, and oil are still the core of the global energy system. They cannot be wished away. Perhaps it is time to think differently: that is, to make fossil fuels more emissions-efficient by using carbon capture or other technologies, cutting methane emissions, and electrifying oil and gas operations. This is not popular among many climate advocates, who would prefer to see fossil fuels “stay in the ground.” That just isn’t happening. The much likelier scenario is that they are gradually displaced. McKinsey projects peak oil demand later this decade, for example, and for gas, maybe sometime in the late 2030s. Even after the peak, though, oil and gas will still be important for decades.

Second, in the longer term, it may be possible to get back onto 1.5°C if, in addition to reducing emissions, we actually remove them from the atmosphere, in the form of “negative emissions,” such as direct air capture and bioenergy with carbon capture and storage in power and heavy industry. The IPCC itself assumed negative emissions would play a major role in reaching the 1.5°C target; in fact, because of cost and deployment problems, their contribution so far has been tiny.

Finally, as I have argued before, it’s hard to see how we limit warming even to 2°C without more nuclear power, which can provide low-emissions energy 24/7, and is the largest single source of such power right now.

None of these things is particularly popular; none gets the publicity of things like a cool new electric truck or an offshore wind farm (of which two are operating now in the United States, generating enough power for about 20,000 homes; another 40 are in development). And we cannot assume fast development of offshore wind. NIMBY concerns have already derailed some high-profile projects, and are also emerging in regard to land-based wind farms.

Carbon capture, negative emissions, and nuclear will have to face NIMBY, too. But they all have the potential to move the needle on emissions. Think of the potential if fast-growing India and China, for example, were to develop an assembly line of small nuclear reactors. Of course, the economics have to make sense—something that is true for all climate-change technologies.

And as the UN points out, there needs to be progress on other issues, such as food, buildings, and finance. I don’t think we can assume that such progress will happen on a massive scale in the next few years; the actual record since Paris demonstrates the opposite. That is troubling: the IPCC notes that the risks of abrupt and damaging impacts, such as flooding and reduced crop yields, rise “with every increment of global warming.” But it is the reality.

There is one way to get us to 1.5°C, although not in the Paris timeframe: a radical acceleration of innovation. The approaches being scaled now, such as wind, solar, and batteries, are the same ideas that were being discussed 30 years ago. We are benefiting from long-term, incremental improvements, not disruptive innovation. To move the ball down the field quickly, though, we need to complete a Hail Mary pass.

It’s a long shot. But we’re entering an era of accelerated innovation, driven by advanced computing, artificial intelligence, and machine learning that could narrow the odds. For example, could carbon nanotubes displace demand for high-emissions steel? Might it be possible to store carbon deep in the ocean? Could geo-engineering bend the curve?

I believe that, on the whole, the world is serious about climate change. I am certain that the energy transition is happening. But I don’t think we are anywhere near being on track to hit the 1.5°C target. And I don’t see how doing more of the same will get us there.

------

Scott Nyquist is a senior advisor at McKinsey & Company and vice chairman, Houston Energy Transition Initiative of the Greater Houston Partnership. The views expressed herein are Nyquist's own and not those of McKinsey & Company or of the Greater Houston Partnership. This article originally ran on LinkedIn.

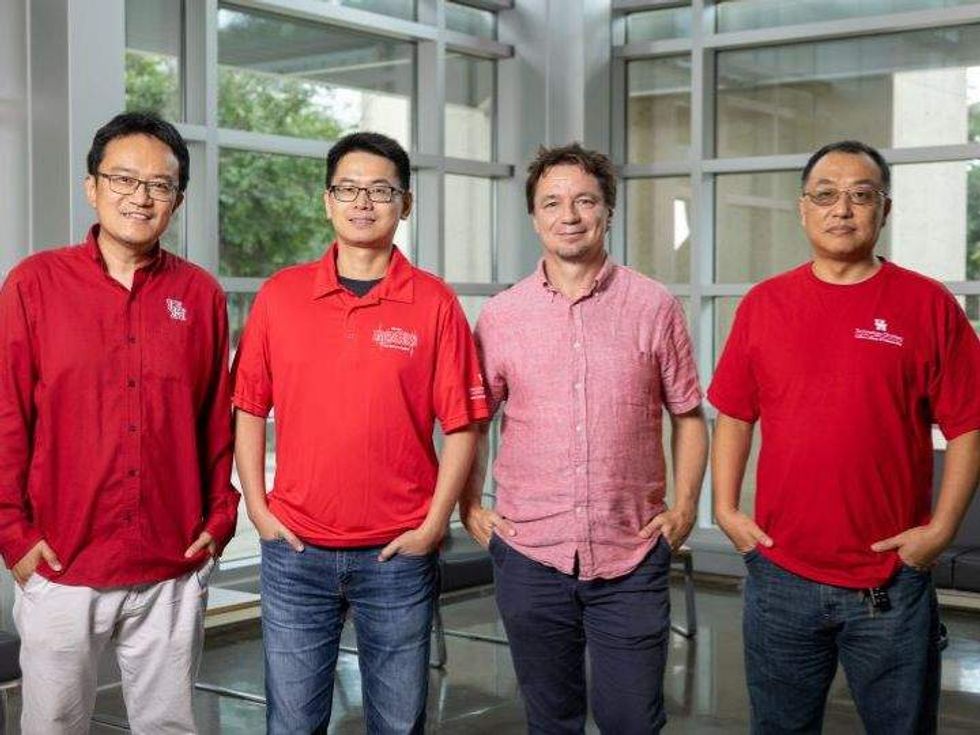

The University of Houston team: Xiaonan Shan, associate professor of electrical and computer engineering; Jiefu Chen, associate professor of electrical and computer engineering; Lars Grabow, professor of chemical and biomolecular engineering; and Xuquing Wu, associate professor of information science technology. Photo via UH.edu
